From Human-first to Agent-first Enterprise Software

Why bounded autonomy, not workflows, becomes the new product value in AI-first product design

In November 2024, after watching Dharmesh Shah’s video on AI agents, I wrote a post trying to make sense of what I had just seen. It was a very impressive presentation.

AI Agents: From Research Powerhouse to Procurement Guru

At that point, agents were still early, fragmented, and largely demo-driven. On the surface, it looked like a powerful demo. What I felt at the time was that AI agents will not threaten enterprise software. They will threaten the assumptions it was built on.

The demo showed an AI agent researching a company, stitching together information from multiple sources, and pushing structured data into a CRM.

Clean. Fast. Impressive.

But the demo left me uneasy. The question that kept coming back was not “how good is this agent?”

It was: Why does the CRM even matter in this flow?

Because AI agents do not threaten enterprise software. They threaten the assumptions it was built on.

The quiet assumption behind today’s software

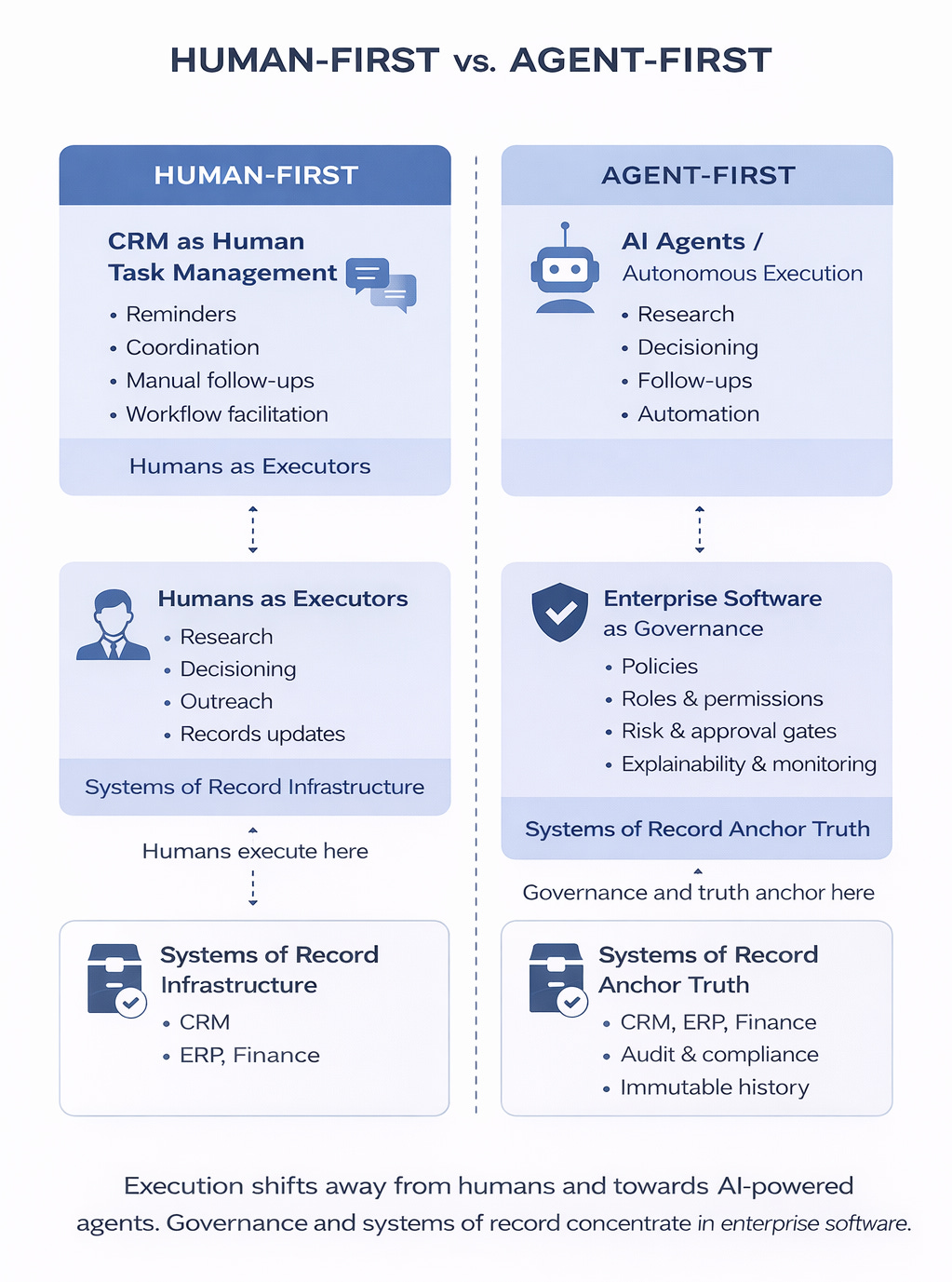

Most enterprise software, CRMs included, is built on a stable assumption.

Humans are the primary actors. Software exists to support them.

CRMs help humans remember, coordinate, follow up, and report.

Procurement tools help humans request, approve, negotiate, and document purchases.

Ticketing systems exist to triage, assign, and track human resolution.

Finance systems guide humans through approvals, reconciliations, and close cycles.

Dashboards exist because humans need visibility into what other humans are doing.

This raises an uncomfortable question.

If AI agents can research accounts, infer intent, decide next actions, execute follow-ups, and trigger outcomes autonomously, what is the CRM actually for?

This model has held for decades. AI agents challenge it, not loudly, but structurally.

That is where the old mental model quietly starts to fail. And since November 2024, agents have matured far faster than most of us expected.

What changed with AI agents since November 2024?

The agent in Dharmesh’s demo did not just assist.

It completed a unit of work.

It researched.

It structured information.

It updated a system of record.

That is a small step technically, but a large step conceptually. Because once an agent can do this reliably, the question becomes:

If the agent can do the work, why is the system optimised around human interaction?

Tools like Claude Cowork today make this question harder to avoid.

An AI that can read files, navigate tools, perform multi-step tasks, and execute work on your behalf shifts expectations.

Claude Cowork is important not because it chats better, but because it normalises autonomous execution in day-to-day work.

Once execution is normalised, expectations never go back. Not overnight, but permanently.

This does not automatically mean Invisible Enterprise Software

The easy conclusion is to say that enterprise software, including systems like CRM, will disappear, replaced by autonomous agents operating in the background.

That may happen in some form, some day. But that framing is too simplistic.

What is more likely is this.

The role of systems changes long before their relevance is questioned.

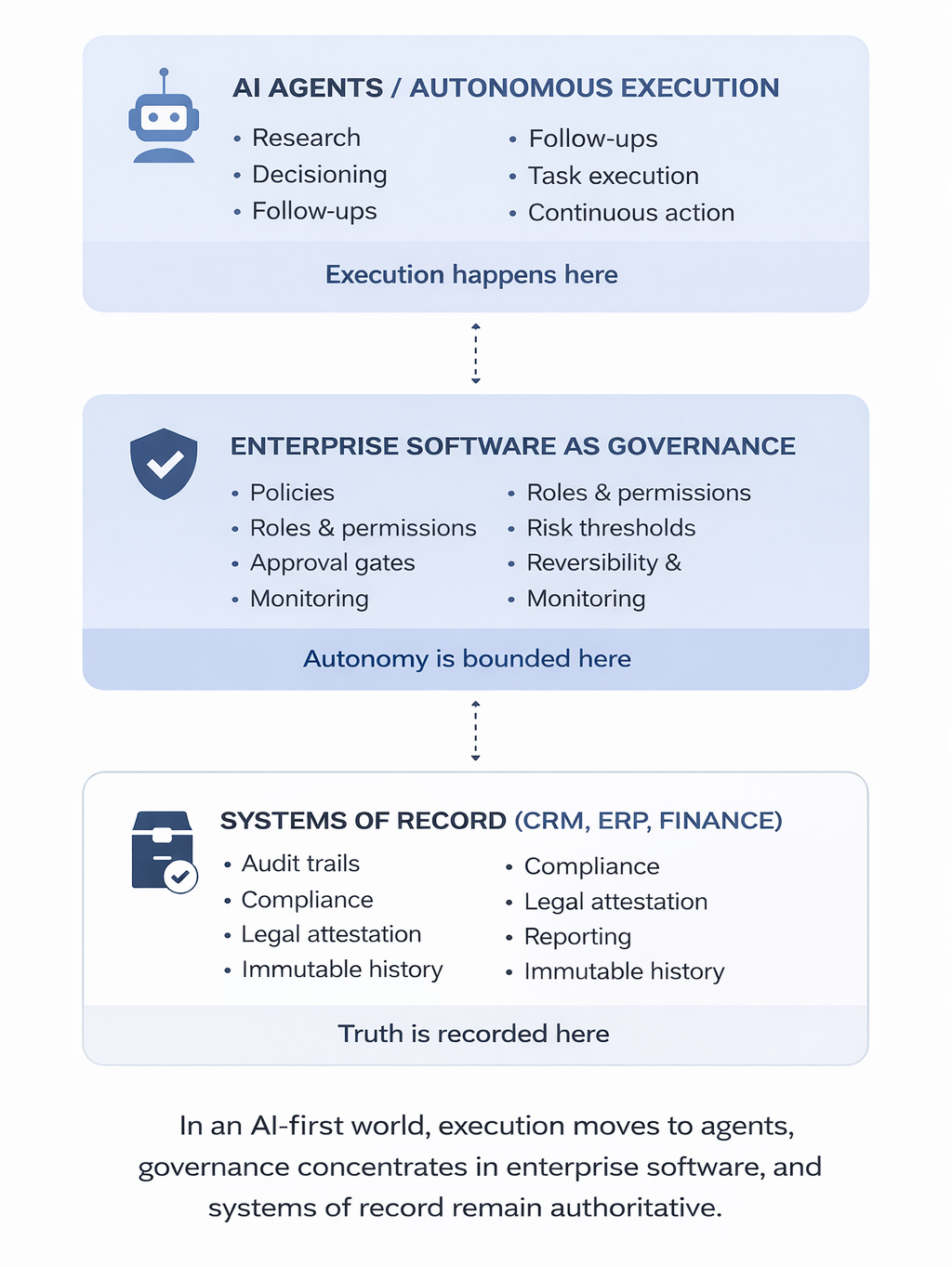

In an AI-first world, software is less about guiding humans through workflows and more about governing autonomous actions.

That is not the same as invisibility.

It is a shift in purpose. And purpose always outlives interfaces.

CRM as a Signal, not the Destination

CRM is a useful example because it sits at the intersection of data, workflow, decisions, and execution.

The system assumes humans are the primary actors and the CRM is the system of record for their actions.

What CRM historically called a “relationship” was not trust or rapport. It was a proxy for memory, continuity, and influence. CRM systems existed to compensate for human limits: forgetting context, missing follow-ups, losing track of intent, and relying on subjective judgment. They also helped humans move deals forward and coordinate activity.

AI agents challenge this assumption. Agents do not have “relationships” the way humans do.

They act on signals and patterns.

An agent can research accounts, infer intent, decide next actions, execute follow-ups, and determine when a deal should progress or stop. In that world, CRM is no longer the place where work is performed.

What we used to call a “relationship” shifts as a result.

Intent signals, behavioural history, response patterns, constraints, and trust thresholds become continuously updated system state, not personal memory.

A relationship becomes a state machine, not a bond.
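To make the state-machine idea concrete, here is a minimal sketch. Everything in it is an assumption made for illustration: the stage names, the signal names (`intent`, `reply`, `no_reply`), and the thresholds are hypothetical and do not come from any real CRM.

```python
from dataclasses import dataclass
from enum import Enum

# Hypothetical thresholds, chosen only for illustration.
ENGAGE_AT = 0.4
NEGOTIATE_AT = 0.7
STALL_AFTER = 3


class Stage(Enum):
    PROSPECT = "prospect"
    ENGAGED = "engaged"
    NEGOTIATING = "negotiating"
    STALLED = "stalled"


@dataclass(frozen=True)
class RelationshipState:
    stage: Stage = Stage.PROSPECT
    intent_score: float = 0.0        # latest inferred intent, clamped to 0..1
    unanswered_followups: int = 0    # consecutive follow-ups with no reply


def apply_signal(state: RelationshipState, signal: str, value: float = 0.0) -> RelationshipState:
    """Return the next state after one observed signal; the old state is untouched."""
    intent = state.intent_score
    unanswered = state.unanswered_followups

    if signal == "intent":
        intent = max(0.0, min(1.0, value))
    elif signal == "reply":
        unanswered = 0
    elif signal == "no_reply":
        unanswered += 1
    else:
        raise ValueError(f"unknown signal: {signal}")

    # The stage is derived from system state, not from anyone's memory.
    if unanswered >= STALL_AFTER:
        stage = Stage.STALLED
    elif intent >= NEGOTIATE_AT:
        stage = Stage.NEGOTIATING
    elif intent >= ENGAGE_AT:
        stage = Stage.ENGAGED
    else:
        stage = Stage.PROSPECT
    return RelationshipState(stage, intent, unanswered)
```

Each signal produces a new state value, so the full history of a “relationship” can be replayed and audited rather than remembered.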

IMHO, systems like CRM will continue to exist as systems of record, because audit, compliance, and accountability demand it.

CRM, in this framing, is not the destination. It is a signal of how enterprise software roles are shifting.

The category name may survive. The mental model may not.

What this means for AI-first thinking in enterprise software

An AI-first system is not just a system with AI features.

It is a redefinition of responsibility.

Execution increasingly shifts to agents.

Enterprise software increasingly governs that execution.

This does not imply invisible software or the disappearance of platforms like CRM.

Systems of record must remain authoritative because audit, compliance, and accountability demand it. This creates a hard boundary.

Agents may decide, propose, and execute workflows, but they must never directly mutate systems of record without passing through explicit governance gates.

No agent writes to a system of record without policy validation, permission checks, and audit capture. In this model, execution can be autonomous. Attestation cannot.
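A governance gate of this kind can be sketched in a few lines. This is an illustration under stated assumptions, not a reference design: `GovernanceGate`, `SystemOfRecord`, the per-agent field permissions, and the `deal_value` ceiling are all hypothetical names and rules invented for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


class GateRejected(Exception):
    """Raised when a requested write fails a permission or policy check."""


@dataclass
class SystemOfRecord:
    records: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)


@dataclass
class GovernanceGate:
    store: SystemOfRecord
    permissions: dict                 # agent_id -> set of fields it may write
    max_deal_value: float = 100_000.0  # hypothetical policy threshold

    def write(self, agent_id: str, record_id: str, changes: dict, reason: str) -> None:
        # Audit capture happens first, so rejected attempts are recorded too.
        entry = {
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "record": record_id,
            "changes": dict(changes),
            "reason": reason,
            "decision": "rejected",
        }
        self.store.audit_log.append(entry)

        self._check_permissions(agent_id, changes)
        self._validate_policy(changes)

        # Only now is the system of record mutated.
        self.store.records.setdefault(record_id, {}).update(changes)
        entry["decision"] = "applied"

    def _check_permissions(self, agent_id: str, changes: dict) -> None:
        denied = set(changes) - self.permissions.get(agent_id, set())
        if denied:
            raise GateRejected(f"{agent_id} may not write {sorted(denied)}")

    def _validate_policy(self, changes: dict) -> None:
        value = changes.get("deal_value")
        if value is not None and value > self.max_deal_value:
            raise GateRejected("deal_value exceeds the policy ceiling")
```

The agent never touches `records` directly. It can only call `write`, and every call leaves an audit entry whether or not the change is applied.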

From a product perspective, this shifts what customers are actually buying.

They are not buying more workflows or better dashboards.

They are buying bounded autonomy, provable compliance, faster decisions without increased risk, and confidence that the system can act on their behalf without creating exposure.

Design priorities change accordingly.

APIs matter more than screens because agents interact programmatically.

Constraints matter more than flows because autonomy must be bounded.

Observability matters more than dashboards because understanding system behaviour matters more than tracking human activity.

Some software may become less interactive. Some will remain visible. The difference is not visibility, but authority.

What this shift means for us as Product and Engineering Leaders

This transition is often framed as a talent or job-risk problem. I think that framing misses the real issue.

The deeper risk is continuing to design, build, and ship software under the assumption that humans are the primary actors.

From a product standpoint, this shift cannot be abrupt.

Customers will not hand over autonomy overnight.

Adoption will be incremental.

Hybrid modes will dominate, with humans staying in the loop longer than ideal.

Trust will be earned through consistency, transparency, and reversibility, not promised through demos.

If you are a Product Manager

The biggest risk is not losing your role.

It is shipping products that quietly lose relevance.

In an AI-first world, products built around human workflows, nudges, and step-by-step guidance serve a shrinking purpose. Adding AI features like summaries or copilots does not fix this.

Product value increasingly lies in:

Owning decisions, not flows

Defining what the system is allowed and not allowed to do

Deciding when autonomy must stop and escalate

Pricing based on outcomes, risk reduction, and authority rather than seats or clicks

Ignoring failure modes is especially dangerous. Agents make unhappy paths common, not exceptional. Reversibility, escalation, and kill switches are product responsibilities, not engineering afterthoughts.

If you are an Engineering Manager or Architect

The risk is not that AI replaces engineering work. The risk is building systems that cannot be safely trusted with autonomy.

Architectures designed for predictable human behaviour struggle when agents act continuously, chain actions rapidly, and explore edge cases at scale.

Engineering leaders must worry about:

Enforcing hard constraints rather than relying on conventions

Observability that captures intent and decision context, not just logs

Designing for reversibility through compensating actions and versioned changes

Controlling delegation, recursion depth, and task fan-out in multi-agent systems

Moving beyond UI-era RBAC toward capability-based, time-bound, and economic permissions

Both roles converge on the same responsibility.

Designing systems where autonomy is powerful but bounded.

If your architecture assumes humans are the primary executors, you are already accumulating risk.

Some systems will adapt to this shift. Others will need to be rebuilt, not extended. Everything else is implementation detail.

How do you know when agents cross the line?

One of the hardest questions in an AI-first world is simple and uncomfortable. How do you know when an agent has done something it was not supposed to do?

This cannot be solved by watching final outputs or reading chat transcripts. It requires deliberate system design.

In practice, this means:

Define policy as code. “Not allowed” must be machine-checkable. Allowed tools, actions, thresholds, data classes, and destinations need to be explicit and enforceable.

Route all agent actions through an execution gateway. Agents should request actions, not perform them directly. The gateway enforces policy checks, risk scoring, approvals, rate limits, and full logging.

Observe intent, not just outcomes. Log planned actions, attempted tool calls, and resulting side effects. Understanding why an action was taken matters as much as what happened.

Detect behavioural anomalies early. Watch for unusual sequences of tool calls, abnormal volumes, new destinations, or recursive task creation. Baselines matter more than raw alerts.

Constrain agent-to-agent communication. Whitelist channels and topics, cap recursion depth and task fan-out, and forbid delegation without explicit approval. Experiments like Moltbook-style multi-agent notebooks show how quickly agents can start coordinating, delegating, and amplifying behaviour if left unconstrained.

Install tripwires for high-risk actions. Vendor creation, spend changes, data exports, external communications, and permission grants should always trigger escalation or review.

Design for reversibility. Assume failure. Support rollbacks, compensating actions, quarantines, and kill switches so mistakes do not become irreversible events.
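Several of the controls above can be sketched together: policy expressed as data, a gateway that agents must ask, tripwires that escalate, a rate limit, intent logging, and a kill switch. This is a simplified illustration. The `POLICY` contents, action names, and the `ExecutionGateway` class are assumptions made for the example, and a real gateway would also need risk scoring, approvals, and anomaly baselines.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ESCALATE = "escalate"


# Policy is plain data, so it can be reviewed, versioned, and tested.
POLICY = {
    "allowed_actions": {"read_account", "send_email", "update_stage",
                        "export_data", "create_vendor"},
    "tripwires": {"export_data", "create_vendor"},  # always need human review
    "max_actions_per_run": 3,
}


@dataclass
class ActionRequest:
    agent_id: str
    action: str
    intent: str  # why the agent wants to act, logged alongside the decision


class ExecutionGateway:
    def __init__(self, policy: dict):
        self.policy = policy
        self.log = []        # (agent, action, intent, decision) tuples
        self.counts = {}     # accepted requests per agent
        self.killed = set()  # agents stopped by the kill switch

    def kill(self, agent_id: str) -> None:
        self.killed.add(agent_id)

    def request(self, req: ActionRequest) -> Decision:
        decision = self._decide(req)
        # Every attempt is logged, including denied ones.
        self.log.append((req.agent_id, req.action, req.intent, decision.value))
        return decision

    def _decide(self, req: ActionRequest) -> Decision:
        if req.agent_id in self.killed:
            return Decision.DENY
        if req.action not in self.policy["allowed_actions"]:
            return Decision.DENY
        used = self.counts.get(req.agent_id, 0)
        if used >= self.policy["max_actions_per_run"]:
            return Decision.DENY
        self.counts[req.agent_id] = used + 1
        if req.action in self.policy["tripwires"]:
            return Decision.ESCALATE
        return Decision.ALLOW
```

The gateway returns a decision rather than performing the action, which keeps “request” and “execute” separate. Because denied and escalated attempts are logged with their stated intent, the log shows what an agent tried to do, not only what it did.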

These controls are not defensive paranoia. They are the minimum requirements for running autonomous systems safely at scale. Without them, autonomy is just deferred failure.

Ending where we should, not where it is comfortable

The original post was not an attempt to predict the end of enterprise software categories.

It was an attempt to revisit first principles.

In an AI-first world, the organising question is no longer how efficiently humans can work inside systems. It is how much autonomy organisations are willing to delegate, and under what constraints.

The north star is not maximum automation. It is the maximum autonomy the organisation is comfortable defending to auditors, customers, and regulators.

The names of enterprise systems may stay the same. Their role may not.

The real task for product and engineering leaders is not choosing the next platform. It is deciding where authority lives.

Happy Learning!